Data Engineer | Python Developer | Systems Thinking Advocate

Data Engineer with hands-on experience building ETL pipelines, processing data for analytics (GCP/BigQuery), and developing LLM-driven automated workflows with caching, structured outputs (JSON), and cost-optimized API usage.

Backed by 10+ years of engineering experience in complex system design (Dipl.-Ing.), I transitioned into Data Engineering and now apply systems thinking to modern data architectures, with an emphasis on modularity, reliability, and automation. I build stable, scalable, business-oriented solutions by combining classical engineering principles with modern Python and data tooling, including end-to-end automated pipelines (ingestion → scoring → generation → persistence) with LLM integration and containerized deployment.

1. ThecoreGrid.dev | AI-Driven Tech Media Hub & Automated Data Pipeline

- Role: Founder & Sole Developer

- Stack: Python, SQLite, Linux (systemd), OpenAI API.

- Highlights: Designed and built a headless microservice architecture with a fully automated content pipeline (RSS ingestion → LLM-based scoring → transformation → translation → direct database injection via WP-CLI). Implemented a Flask-based webhook layer for decoupled service communication and an AIOps watchdog for basic system monitoring.

- → Reduced manual publication time by ~80%.

- ➡️ View the Architecture Showcase Repository
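A minimal sketch of how a staged content pipeline of this kind could be wired together. It is illustrative only: `Article`, `run_pipeline`, and the three stage functions are hypothetical names, the scoring stage is a keyword stand-in for the LLM call, and the translation stage is a stub. None of it is taken from the actual repository.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Iterable, Optional


@dataclass
class Article:
    title: str
    body: str
    score: float = 0.0
    translations: dict[str, str] = field(default_factory=dict)


# A stage returns the (possibly modified) article, or None to drop it.
Stage = Callable[[Article], Optional[Article]]


def run_pipeline(items: Iterable[Article], stages: list[Stage]) -> list[Article]:
    """Pass each item through the stages in order; a None result drops the item."""
    published = []
    for item in items:
        current: Optional[Article] = item
        for stage in stages:
            current = stage(current)
            if current is None:
                break
        if current is not None:
            published.append(current)
    return published


def keyword_score(article: Article) -> Article:
    # Stand-in for an LLM-based relevance score (assumption, not the real scorer).
    article.score = 1.0 if "python" in article.body.lower() else 0.0
    return article


def drop_low_score(article: Article) -> Optional[Article]:
    return article if article.score >= 0.5 else None


def stub_translate(article: Article) -> Article:
    # Stand-in for a translation call; only tags the title.
    article.translations["de"] = f"[de] {article.title}"
    return article


feed = [
    Article("Intro to Python typing", "Python type hints in practice"),
    Article("Gardening notes", "Tomatoes and basil"),
]
result = run_pipeline(feed, [keyword_score, drop_low_score, stub_translate])
print([a.title for a in result])
```

Keeping each stage a plain function with the same signature makes it straightforward to swap the stand-ins for real API calls or to reorder the steps.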

2. Centralized Marketing Data Warehouse & NLP SEO Tools

- Context: B2B E-Commerce Platform (Let's Gastro)

- Highlights: Built a centralized Marketing Data Warehouse in BigQuery by developing Python-based ETL pipelines for GA4 and API data ingestion.

- → Reduced manual reporting effort by 90%.

- Developed a Python tool (KeyBERT, SpaCy) for automated keyword synonym generation from messy product data.

- → Improved internal search coverage by ~70%.

- ➡️ View the NLP Keyword Extraction Pipeline

3. AI-Chatbot for Regional Tourism

- Context: Cooperation with TU Berlin

- Stack: Prompt Architecture, JSON Structuring, Context Engineering.

- Highlights: Aggregated and cleaned datasets for a regional tourism platform to prepare structured knowledge bases for chatbot integration. Designed multi-level dialogue logic (focusing strictly on semantic data structuring and prompt engineering, without backend coding) to ensure response consistency within LLM constraints.

4. Automated 404 Error Analysis Tool (SEO / GSC)

- Context: E-Commerce Platform (~15k products)

- Stack: Python, Pandas, NumPy, BeautifulSoup, Cloudscraper.

- Highlights: Developed a data analysis script to identify root causes of 404 errors at scale using Google Search Console exports. The tool enriches URLs with validation layers (HTTP status, sitemap presence, product database lookup) to detect recurring structural patterns.

- → Reduced error analysis time by ~50%.

- ➡️ View the 404-Error Analysis Tool
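The validation layers described above can be illustrated with a small sketch. It assumes the HTTP status, sitemap presence, and product database lookup have already been collected for each URL. The `UrlCheck` type, the category labels, and the example URLs are hypothetical and chosen for illustration, not taken from the tool itself.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class UrlCheck:
    url: str
    http_status: int      # status observed when re-checking the URL
    in_sitemap: bool      # URL is still listed in the sitemap
    in_product_db: bool   # a matching product exists in the database


def classify(check: UrlCheck) -> str:
    """Map the validation layers to a coarse root-cause label."""
    if check.http_status != 404:
        return "resolved"
    if check.in_sitemap:
        return "stale_sitemap_entry"
    if check.in_product_db:
        return "broken_product_url"
    return "removed_or_unknown"


def path_pattern(url: str) -> str:
    """Reduce a URL to its first path segment, e.g. /products/abc -> /products/*."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    if len(segments) > 1:
        return f"/{segments[0]}/*"
    return "/" + "/".join(segments)


def summarize(checks: list[UrlCheck]) -> Counter:
    """Count (path pattern, root cause) pairs to surface recurring structures."""
    return Counter((path_pattern(c.url), classify(c)) for c in checks)


checks = [
    UrlCheck("https://shop.example/products/old-pan", 404, True, False),
    UrlCheck("https://shop.example/products/steel-pot", 404, False, True),
    UrlCheck("https://shop.example/products/knife-set", 404, True, True),
    UrlCheck("https://shop.example/blog/2019/recipes", 404, False, False),
    UrlCheck("https://shop.example/about", 200, False, False),
]
summary = summarize(checks)
for (pattern, cause), count in summary.most_common():
    print(pattern, cause, count)
```

Grouping by path pattern rather than by full URL is what turns thousands of individual 404s into a short list of structural causes.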

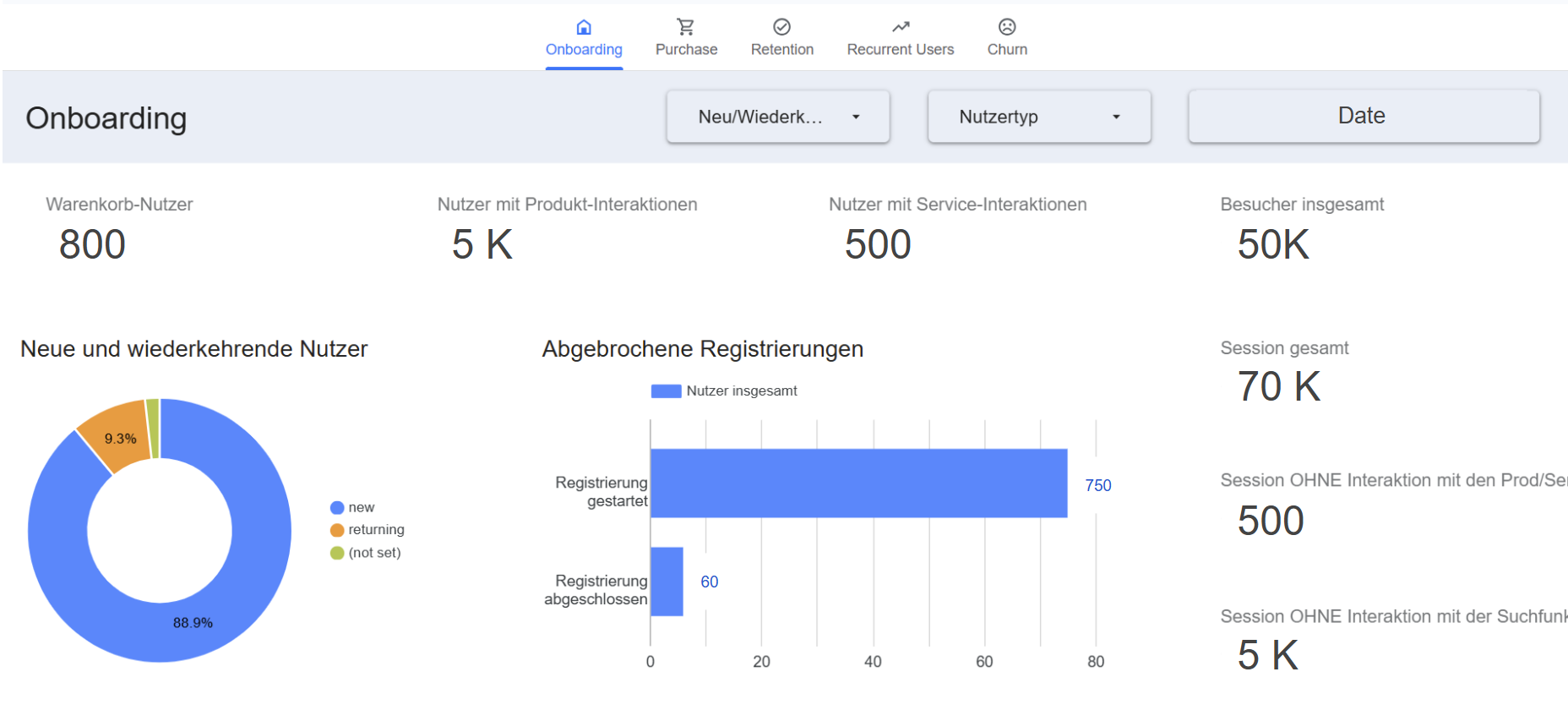

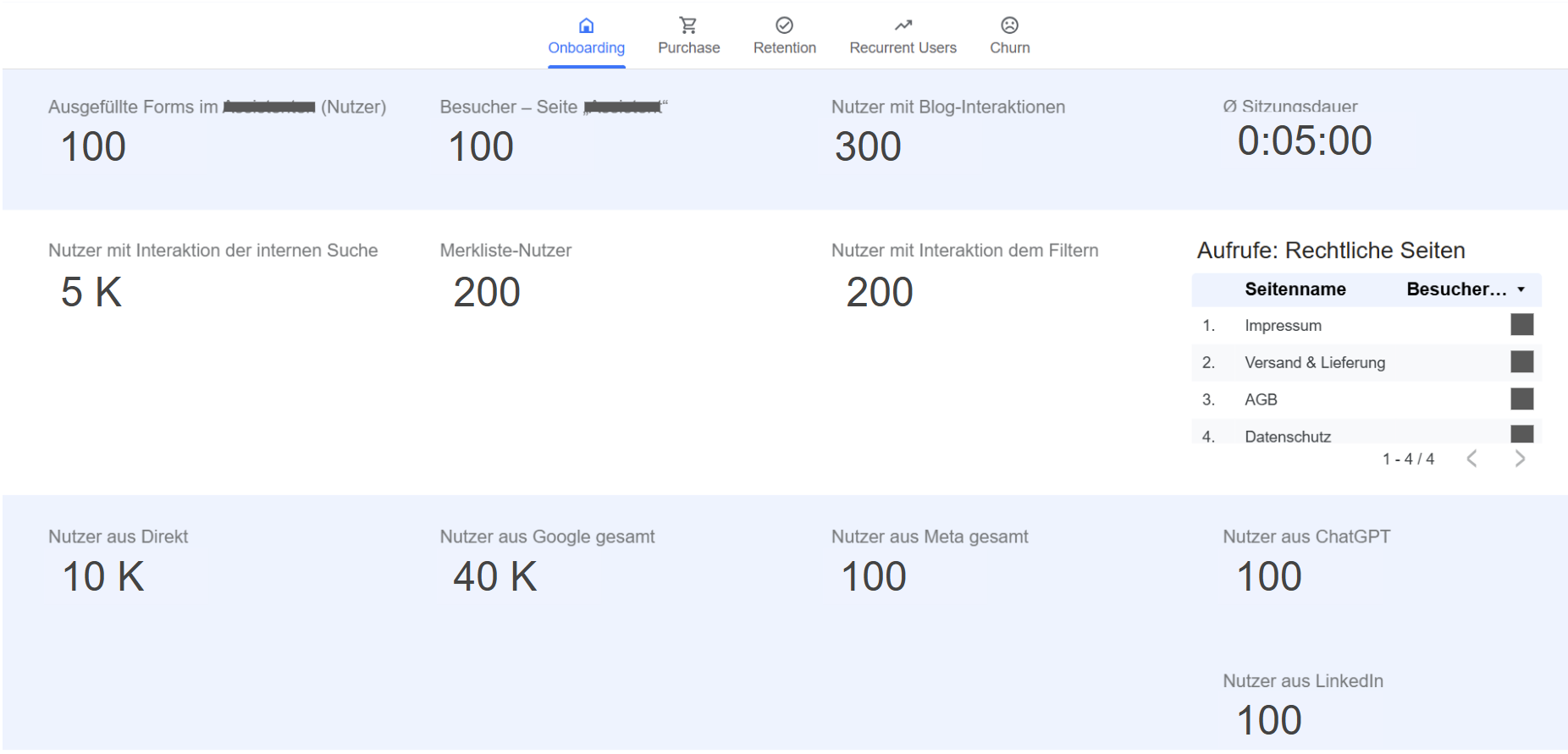

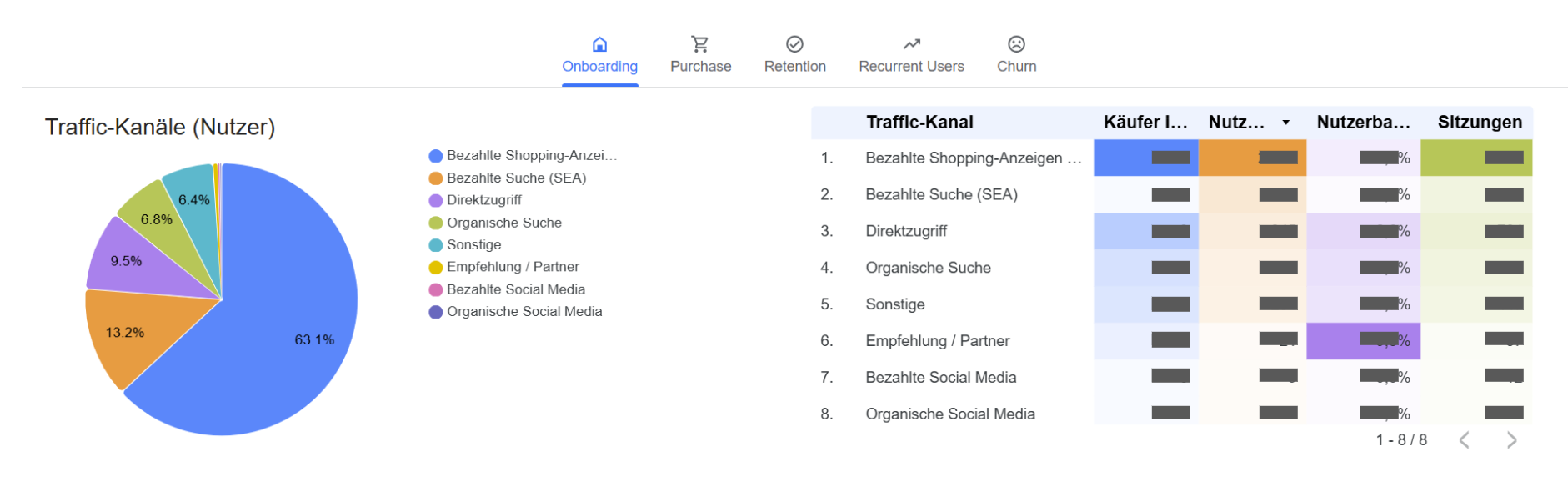

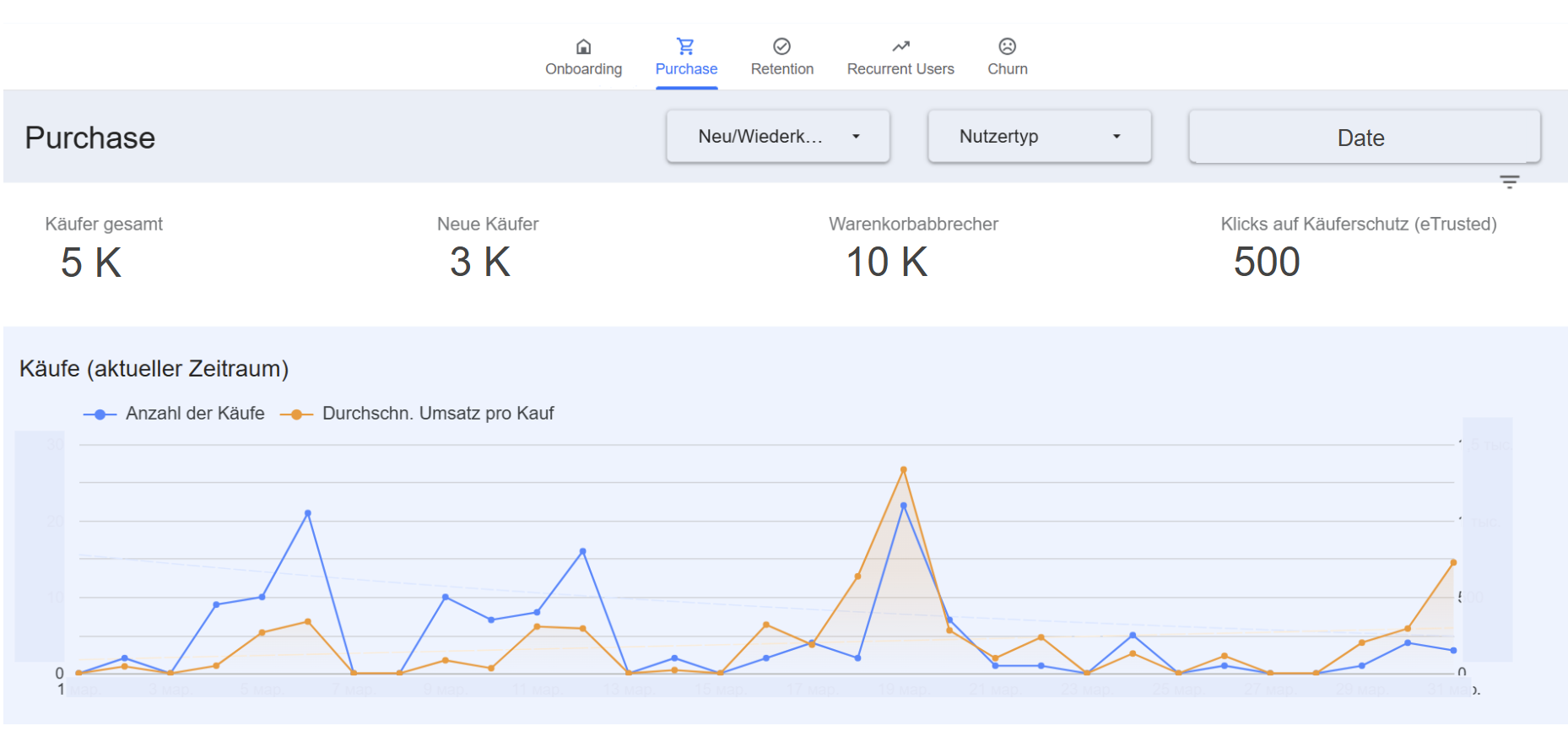

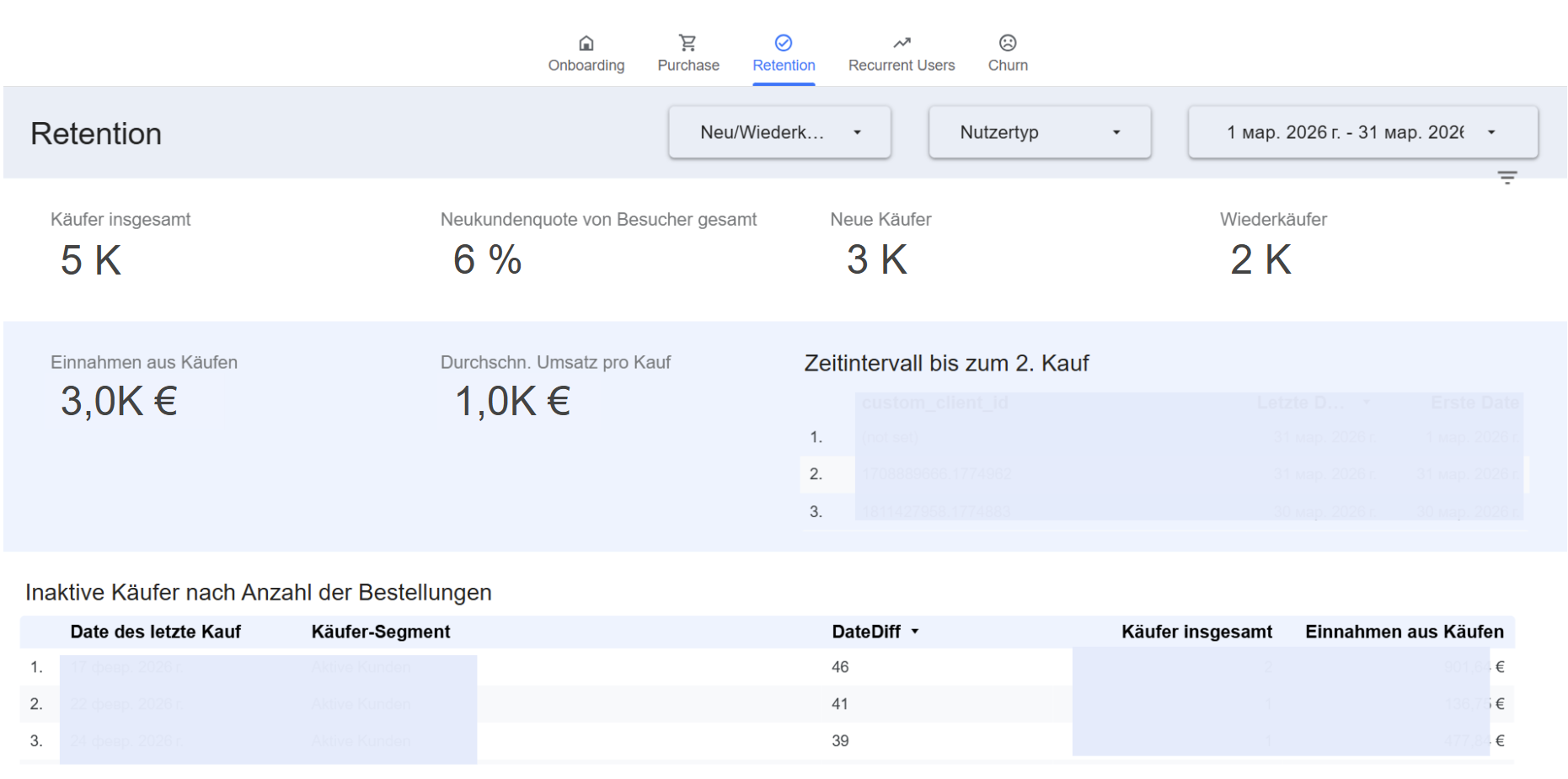

5. Customer Journey & LTV Analytics Dashboard (Looker Studio)

- Context: B2B E-Commerce Platform (Let's Gastro)

- Highlights: Designed and deployed a comprehensive BI dashboard to track the entire user lifecycle (Onboarding, Purchase, Retention, Churn).

- Integrated custom tracking (`custom_client_id`) to bypass standard GA4 limitations, enabling cross-session LTV (Lifetime Value) and cohort analysis for repeat buyers.

- Visualized critical bottlenecks in the sales funnel (e.g., interaction with internal search vs. checkout drop-offs).

- → Gave C-level management real-time insight into marketing efficiency and user retention metrics.

- (Note: Screenshots display sanitized mock data to comply with NDA)
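To illustrate what a stable client identifier makes possible, here is a small sketch of cross-session LTV and cohort aggregation keyed on `custom_client_id`. The event tuples are made-up sample data, and the cohort definition (month of first purchase) is an assumption for the example rather than the dashboard's actual logic.

```python
from collections import Counter, defaultdict
from datetime import date

# Hypothetical purchase events: (custom_client_id, purchase date, revenue)
events = [
    ("c-001", date(2024, 1, 15), 120.0),
    ("c-001", date(2024, 3, 2), 80.0),
    ("c-002", date(2024, 1, 20), 50.0),
    ("c-003", date(2024, 2, 5), 200.0),
    ("c-003", date(2024, 2, 28), 40.0),
]


def ltv_by_cohort(events):
    """Group clients by month of first purchase and sum their lifetime revenue."""
    first_purchase = {}
    revenue = defaultdict(float)
    orders = Counter()
    for client_id, day, amount in events:
        revenue[client_id] += amount
        orders[client_id] += 1
        if client_id not in first_purchase or day < first_purchase[client_id]:
            first_purchase[client_id] = day

    cohorts = defaultdict(lambda: {"clients": 0, "revenue": 0.0, "repeat_buyers": 0})
    for client_id, first_day in first_purchase.items():
        cohort = cohorts[first_day.strftime("%Y-%m")]
        cohort["clients"] += 1
        cohort["revenue"] += revenue[client_id]
        cohort["repeat_buyers"] += int(orders[client_id] > 1)
    return dict(cohorts)


cohorts = ltv_by_cohort(events)
for month in sorted(cohorts):
    print(month, cohorts[month])
```

Because every purchase carries the same identifier regardless of session, revenue from later visits is attributed to the client's original cohort.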

BI Dashboard Preview (sanitized):

- Onboarding & Search Funnel

- Conversion & Event Metrics

- Acquisition & Traffic Heatmap

- Purchase & Checkout Flow

- Retention & LTV Analysis

6. AI Job Agent — Autonomous Application Pipeline (Additional Project)

- Stack: Python, OpenAI API, Docker, Google Sheets API, Pandas.

- Highlights: Designed and implemented an end-to-end automated pipeline for job search automation (multi-source ingestion via APIs/RSS → LLM-based scoring → dynamic content generation → persistence).

- Built a modular AI agent with language detection (EN/DE), structured JSON outputs, and template-based document generation (CV + cover letters).

- Implemented a local caching layer to reduce API costs and avoid redundant processing.

- Containerized the application with Docker and designed it for scheduled execution (cron-based automation).

- → Demonstrates end-to-end pipeline design, LLM integration, and production-oriented system thinking.

- ➡️ View the Repository
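A minimal sketch of how a local caching layer for LLM calls might look, assuming responses are JSON-serializable and keyed by a hash of model name and prompt. The `ResponseCache` class, the SQLite storage, and the `fake_llm` stand-in are assumptions for illustration; the project's actual cache may be built differently.

```python
import hashlib
import json
import sqlite3


class ResponseCache:
    """Store structured responses keyed by a hash of (model, prompt)."""

    def __init__(self, path=":memory:"):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS cache (key TEXT PRIMARY KEY, value TEXT NOT NULL)"
        )

    @staticmethod
    def make_key(model, prompt):
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_compute(self, model, prompt, compute):
        """Return the cached result if present; otherwise call compute and store it."""
        key = self.make_key(model, prompt)
        row = self.conn.execute(
            "SELECT value FROM cache WHERE key = ?", (key,)
        ).fetchone()
        if row is not None:
            return json.loads(row[0])
        result = compute(prompt)
        self.conn.execute(
            "INSERT INTO cache (key, value) VALUES (?, ?)", (key, json.dumps(result))
        )
        self.conn.commit()
        return result


calls = []


def fake_llm(prompt):
    # Stand-in for a paid API call; records each invocation.
    calls.append(prompt)
    return {"score": 0.8, "language": "en"}


cache = ResponseCache()
first = cache.get_or_compute("model-x", "Score this job posting", fake_llm)
second = cache.get_or_compute("model-x", "Score this job posting", fake_llm)
print(first == second, len(calls))
```

Passing a file path instead of `:memory:` would let the cache persist between scheduled runs, so repeated postings are not scored twice.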

- LinkedIn: Anna Grid

- Location: Berlin, Germany 🇩🇪